Artwork credits to the amazing and illustrious Secret Pikachu.

Recently, EZKL and Optimism announced a partnership to prove the correctness of the Retroactive Public Goods Funding results. We’ve piloted the proof with Round 3 of Retroactive Public Goods Funding, an allocation worth nearly 30MM OP at announcement to Optimism contributors.

We’ve spent the past few weeks building a platform for verifying the results. The TL;DR:

- The Retroactive Public Goods Funding process ideally improves with each round via governance feedback

- We audited the latest iteration — and here’s a cryptographic proof you can verify

- This paves the way for an end-to-end automated process with private voting, checks and balances on the allocation algorithm, and smart contract enabled allocation delivery

For stakeholders, this audit means many things:

- For badgeholders, your vote was fairly counted

- For recipients, your allocation was fairly calculated

- For everyone else, the results actually correspond with Optimism governance — governance at work!

We’ll walk through how this works and what it means.

Designing Retroactive Funding

In 2021, the Optimism team first proposed the retroactive public goods funding (RetroPGF) mechanism. It presented an alternative approach to proactive funding around a simple core principle: it’s easier to agree on what was useful than what will be useful.

This takes a stab at the nonprofit, open source dilemma. Without a path to profitability, these projects are typically ineligible for upfront venture capital. However, misaligned incentives shouldn’t imply that these projects can’t be rewarded. Oftentimes, the highest-impact initiatives can drive sustainable, positive externalities.

Retroactive Funding offers that light at the end of the tunnel. It does so by recognizing the gap between the profit earned by project contributors and the project’s actual impact (value created) — and rewards contributors for that gap. This is the impact = profit framework.

This is a general framework — but with Optimism specifically, there’s a confluence of three factors that make Retroactive Funding work in practice:

- a source of rewards (from OP token allocation and surplus protocol revenue)

- a possibility of deferred rewards (software and internet-based initiatives require little upfront capital investment)

- a robust community willing to participate in impact evaluation.

A core tenet of the Optimism community is agile iteration. That means the design of Round 3 looks much different from that of Round 1. Round 3 leans heavily on the impact evaluation framework and, because impact is both a qualitative and a quantitative measure, relies heavily on the judgement of voters (badgeholders).

Votes are currently fed through an allocation algorithm, published publicly by the Optimism team. The team announces the algorithm’s results at the conclusion of a round, as well as learnings to iterate upon for the next rounds.

The trickiest bit is effectively measuring impact. Reliance on quantitative metrics risks exclusion. Reliance on qualitative feedback risks human biases, among other coordination challenges. And there are many more tradeoffs beyond these. Consequently, the algorithm and overarching design continue to evolve and evoke discussion.

The integrity challenge

One of the main tradeoffs for Retroactive Funding is related to privacy.

For such a large pool of OP rewards, transparency is important. We want to ensure results are aligned with previous governance decisions. More importantly, this recognizes that many projects depend on the allocation for sustainability.

However, badgeholder votes are private in Retroactive Funding. A longstanding feature of voting systems, this allows voters to express their true preferences without fear of retaliation, coercion, or pressure from others. It makes it difficult to execute vote buying, and encourages honest participation.

This presents a key challenge: verifying that allocation outcomes are truly based on badgeholder votes. Even though the allocation algorithm is public (and we can check that it accurately codifies forum promises), we can’t run a check without the input data. In the worst case, we can’t guarantee that the provided allocation algorithm was even used or that all votes were counted.

The zero knowledge solution

Making this process transparent requires both a proof that legitimate votes were counted and that the allocation algorithm was run as intended, whilst keeping the votes themselves private. This is exactly the sort of proof zero-knowledge (ZK) cryptography was designed to enable — and why we’re so excited to showcase this project as a hallmark use of the technology.

There are two places we apply ZKP:

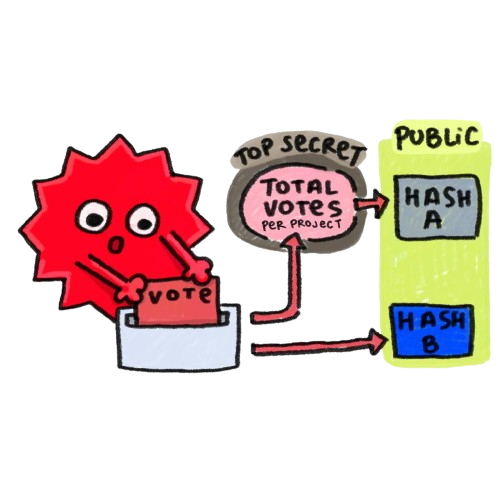

1. Verifying the voting process

The first circuit verifies that the cast ballots are legitimate, and that all legitimate ballots are counted. Ballots are legitimate if signed by a badgeholder from the public whitelist.

The details:

- Check the ballot signatures against the whitelist.

- For each project, create a hash of all its legitimate ballots. This is denoted as Hash A.

- Aggregate the hashes to generate an overall proof. This proof means that across all projects, all legitimate votes were counted.

This results in Proof A.
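The steps above can be sketched in plain Python. This is only an illustration of the logic the first circuit proves, not the circuit itself: the ballot layout, the `signer` field (standing in for an address recovered from a verified signature), the canonical JSON encoding, and the choice of SHA-256 are all assumptions made for this example.

```python
import hashlib
import json

# Hypothetical public whitelist of badgeholder addresses.
WHITELIST = {"0xaaa", "0xbbb", "0xccc"}

# Hypothetical ballots. "signer" stands in for the address recovered from a
# valid signature; real signature verification is NOT performed here.
ballots = [
    {"signer": "0xaaa", "votes": {"project-1": 100.0, "project-2": 50.0}},
    {"signer": "0xbbb", "votes": {"project-1": 80.0}},
    {"signer": "0xzzz", "votes": {"project-1": 1e9}},  # not a badgeholder
]

def legitimate(ballots, whitelist):
    """Keep only ballots whose signer is on the whitelist."""
    return [b for b in ballots if b["signer"] in whitelist]

def project_hashes(ballots):
    """Hash the set of (signer, amount) votes for each project."""
    per_project = {}
    for ballot in ballots:
        for project, amount in ballot["votes"].items():
            per_project.setdefault(project, []).append((ballot["signer"], amount))
    hashes = {}
    for project, entries in per_project.items():
        # Sort so the hash does not depend on the order ballots arrived in.
        canonical = json.dumps(sorted(entries), separators=(",", ":"))
        hashes[project] = hashlib.sha256(canonical.encode()).hexdigest()
    return hashes

legit = legitimate(ballots, WHITELIST)
hash_a = project_hashes(legit)
```

Dropping or altering any legitimate vote changes the hash for the affected project, which is what lets the hash act as a commitment to the full set of counted ballots.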

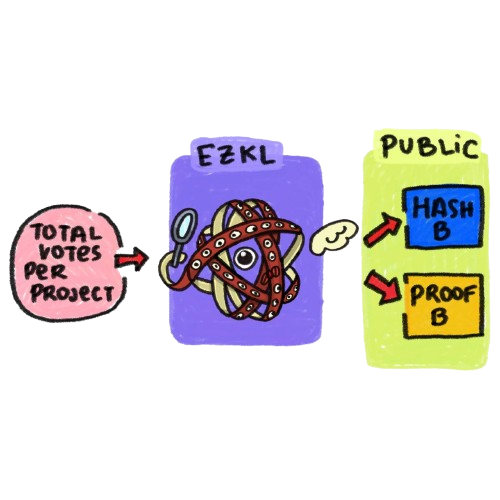

2. Checking the allocation algorithm

The second circuit verifies the usage of the allocation algorithm.

The details:

- For each project, we repeat steps 1 and 2 of the first circuit. The resulting hash, however, is denoted Hash B. We will check that Hash A = Hash B out of circuit.

- Generate a proof over the allocation algorithm. The algorithm takes in a ballot table as input, and outputs an allocation table. We only need one proof of the whole algorithm.

This results in Proof B.
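To make the "ballot table in, allocation table out" shape concrete, here is a minimal sketch. The rule used below (take the median vote per project, then scale to a fixed pool) is a simplified stand-in chosen for illustration; it is not the allocation algorithm published by the Optimism team, and the function name and table layout are hypothetical.

```python
from statistics import median

def allocate(ballot_table, pool):
    """Map {project: [vote amounts]} to {project: allocation}.

    Stand-in rule (an assumption for this sketch): score each project by the
    median of its votes, then scale the scores so they sum to `pool`.
    """
    scores = {p: median(votes) for p, votes in ballot_table.items() if votes}
    total = sum(scores.values())
    if total == 0:
        return {p: 0.0 for p in scores}
    return {p: pool * score / total for p, score in scores.items()}

# Hypothetical ballot table and pool size.
allocation_table = allocate({"project-1": [1, 3, 2], "project-2": [6]}, pool=100)
```

Whatever the real rule is, the point is the same: the proof covers one deterministic function from the ballot table to the allocation table, so a single proof is enough for the whole round.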

3. Combining the proofs

Since we generate the proofs separately, we want to link them together cryptographically. This ensures there is no tampering with votes in between proofs. We link them by checking that the hashes of legitimate votes, Hash A and Hash B, are the same. This is the same hash you see on the platform, just in QR form.
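The out-of-circuit link amounts to an equality check on the public hashes exposed by each proof. A minimal sketch, assuming each proof's public inputs have already been parsed into a `{project: hash}` dictionary (the function names and the placeholder hash strings are hypothetical):

```python
def mismatched_projects(hashes_a, hashes_b):
    """Return the projects whose vote hashes differ between the two proofs."""
    projects = set(hashes_a) | set(hashes_b)
    return sorted(p for p in projects if hashes_a.get(p) != hashes_b.get(p))

def proofs_are_linked(hashes_a, hashes_b):
    """True only if both proofs commit to the same votes for every project."""
    return not mismatched_projects(hashes_a, hashes_b)

# Placeholder values standing in for real hash outputs.
hashes_a = {"project-1": "9f2c", "project-2": "41ab"}
hashes_b = {"project-1": "9f2c", "project-2": "41ab"}
tampered_b = {"project-1": "9f2c", "project-2": "ffff"}
```

Here `proofs_are_linked(hashes_a, hashes_b)` holds, while `mismatched_projects(hashes_a, tampered_b)` singles out `project-2`, so a mismatch can be traced to the specific project it affects.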

Everyone’s verification matters

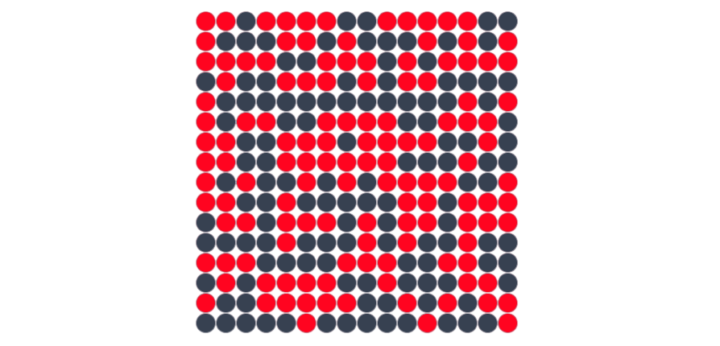

This is where you come in! When you press verify, you check the allocation was correct for a given project. You can also verify the allocation for the entire RetroPGF.

Even though the proofs are for all projects, each proof has a set of public variables particular to a given project. In Proof A this is the hash of the votes (Hash A); in Proof B this is the hash of the votes (Hash B) and the allocation amount for a given project. If we change any of those variables, we break verification for a particular project. Try it yourself!

If you’re neither a badgeholder nor a recipient, why hit the verify button? Simply put, verifying the results of the round contributes to the security and legitimacy of the process.

Blockchains have both social consensus (which fork is the main one, which client should be run, etc.) and computational consensus. A smart contract can enforce a set of rules, but humans decide which copy of the smart contract is the canonical one. Election workers count ballots, but citizens recognize the result. Similarly, by verifying the RetroPGF results for one or more projects, you help solidify the social consensus around the legitimacy of the vote. You are an election observer, contributing to a successful audit.

Improving the system

This is just the first step in auditing and automating the Retroactive Funding process. In the future, it is possible to create more comprehensive systems and even automate the process end-to-end. This may look something like:

- Algorithm implemented and formally verified against specifications in governance forums

- Votes checked against whitelist of badgeholders via a proof of legitimacy

- Upon verification, votes run through the allocation algorithm

- Final allocation checked via a proof of calculation

- Upon final verification, funds distributed automatically

This also makes it easier to reflect and analyze voter behavior without revealing information about any one badgeholder — facilitating incremental improvement for the next round.

Implementation takeaways

You can find the code for this here and here. We briefly discuss some details which may require background knowledge on zero-knowledge cryptography.

Allocation algorithm

Optimism’s allocation algorithm was written in Python, which made the EZKL Python library a natural fit for in-program conversion of the data science into zero-knowledge proofs. You’ll also notice that OP allocation votes are cast with floating point amounts, which meant a very low tolerance for imprecision.

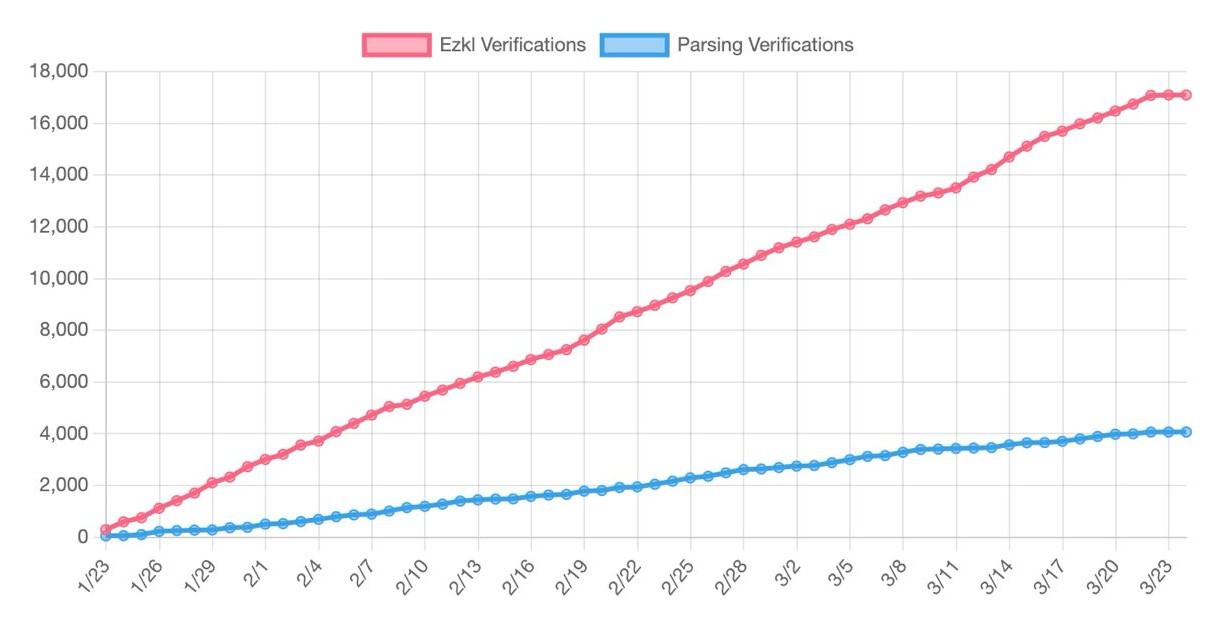

EZKL already optimizes heavily for precision given the frequency of nonlinear operations (reciprocals, softmax, etc.) in data science and machine learning models. However, we also made substantial improvements to the core engine, and specifically how we perform lookups, in order to retain proof size while increasing numerical accuracy. Incidentally, proving performance for other models like random forests and transformers improved significantly (sometimes over 90%). A win-win!
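To see why floating point amounts put pressure on precision, consider a generic fixed-point encoding, where a real value is multiplied by a power of two and rounded to an integer before it enters a circuit. The sketch below is a textbook illustration rather than EZKL's actual implementation; `scale_bits` and the sample vote amounts are hypothetical.

```python
def quantize(x, scale_bits):
    """Encode a float as an integer with `scale_bits` fractional bits."""
    return round(x * (1 << scale_bits))

def dequantize(q, scale_bits):
    """Decode a fixed-point integer back to a float."""
    return q / (1 << scale_bits)

def max_error(values, scale_bits):
    """Largest round-trip error over `values` at the given scale."""
    return max(
        abs(v - dequantize(quantize(v, scale_bits), scale_bits)) for v in values
    )

votes = [1234.56, 0.1, 99999.999]  # hypothetical vote amounts
coarse = max_error(votes, scale_bits=4)
fine = max_error(votes, scale_bits=16)
```

The round-trip error is bounded by half of the smallest representable step, so each extra bit of scale halves the worst case. The cost is a larger range of integers that the circuit has to handle, which is the tension between numerical accuracy and proof size described above.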

Signature verification

Signature verification / REGEX processing is not currently a part of EZKL’s capabilities. We therefore leveraged the RISC Zero zkVM to build the first circuit. Its stack provided the flexibility to roll out a proving pipeline in a short period of time. However, the current program is largely unoptimized for the virtual machine, which meant proving time took much longer and pre-compressed proof size was much larger than the EZKL proof on an absolute basis. We selected RISC Zero over SP1 due to SP1’s current lack of bindings to Metal / CUDA and its even larger proof size.

Zero-knowledge systems

There remains much room for improvement on the zero-knowledge frontend. There are many potential approaches, whether it be optimizing our code for zkVMs, building a thinner VM specifically for ballot and JSON parsing (à la zkemail), or even improving the ballot format and signature scheme such that a REGEX-optimized frontend (instead of a VM) is sufficient!

Additionally, this application presents opportunities to implement multi-primitive systems. For example, private votes could be stored in a trusted execution environment (TEE) or alternative hardware module. Cryptographic primitives are not mutually exclusive, and zero-knowledge systems are compatible with additional security measures.

Overall, this project showcases the versatility of modern zero-knowledge tooling. It took a day to build the EZKL circuits and less than a week to design the RISC Zero circuits. Thanks to hashing and other creative commitments, it’s entirely feasible to link very different proving systems together into one cohesive application.

Final remarks

We hope this project inspires other public goods funding initiatives to improve transparency with integrable zero-knowledge infrastructure. This project also holds broader implications for both governance processes and financial promises.

- For funds with LPs or compliance requirements, it is possible to prove adherence to certain portfolio qualities and compliance satisfaction without revealing alpha.

- For entities with active treasuries, it is possible to prove adherence to particular asset baskets and the health of algorithmic management models.

- For software platforms with substantial capital flows, it is possible to prove the health of algorithmic parameter adjustments and circuit breakers.

These are just a few examples of provable guarantees that don’t leak any sensitive information.

Working with a principled team like Optimism has also been a great honor for us, and we’re excited to see what’s next.